About Me

As a robotics engineer and Master's graduate from Northeastern University, I'm deeply passionate about advancing perception systems for autonomous navigation through cutting-edge research and industry collaboration. My expertise spans the full spectrum of perception technologies, from traditional model-based approaches like factor graph optimization and geometric SLAM to model-free deep learning methods, including transformer architectures and end-to-end neural networks.

At Northeastern University's Robust Autonomy Lab (NEURAL) and through industry partnerships with Toyota Research Institute, I've developed production-ready perception systems that bridge the gap between academic research and real-world deployment. My work includes engineering real-time multi-camera visual-inertial SLAM systems with sub-10ms latency, implementing DETR-based panoptic segmentation for 360-degree scene understanding, and creating robust sensor fusion architectures that maintain performance across diverse environmental conditions.

I excel at leveraging both paradigms: employing classical geometric methods like Bundle Adjustment, factor graphs, and visual odometry for precise localization, while simultaneously harnessing modern deep learning through transformer architectures, temporal consistency networks, and multi-modal fusion for semantic scene understanding. This dual expertise allows me to architect comprehensive autonomous navigation systems that combine the reliability of model-based approaches with the adaptability of learned representations.

My ultimate goal is to develop safety-critical perception systems that enable autonomous vehicles to navigate complex, dynamic environments with human-level understanding and beyond-human reliability. I'm always eager to collaborate on innovative projects that push the boundaries of what's possible in autonomous navigation, whether through advancing SLAM algorithms, developing novel computer vision architectures, or creating production-scale perception pipelines for next-generation autonomous systems.

Projects

Real-Time Multi-Camera Visual-Inertial SLAM with Factor Graph Optimization GitHub

Engineered a production-ready, real-time multi-camera visual-inertial SLAM system in C++ with GTSAM backend optimization, developed in collaboration with Toyota Research Institute. Implemented robust multi-sensor fusion architecture integrating stereo/multi-camera arrays, IMU, and GPS for autonomous navigation in challenging environments. Key innovations include: Factor Graph Optimization with Schur complement-based elimination for computational efficiency, Visual Place Recognition with DBoW2-based loop closure detection, Adaptive Relocalization system with coarse-to-fine pose estimation, and Real-time Performance optimized for large-scale deployment. System demonstrates state-of-the-art accuracy on standard benchmarks while maintaining sub-10ms processing latency. Features comprehensive ROS integration, extensive unit testing, and modular architecture supporting various camera configurations. Successfully deployed for autonomous vehicle navigation, demonstrating robust performance across diverse lighting conditions, dynamic environments, and GPS-denied scenarios.
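As a rough illustration of the Schur complement step mentioned above, the block below shows the standard textbook form for a bundle-adjustment-style problem. The symbols are assumptions introduced here, not taken from the project: $H$ is the Gauss-Newton Hessian, split into pose ($p$) and landmark ($l$) blocks, $b$ is the right-hand side, and $\delta$ is the update.

```latex
% Normal equations, partitioned into pose (p) and landmark (l) blocks
\begin{bmatrix} H_{pp} & H_{pl} \\ H_{lp} & H_{ll} \end{bmatrix}
\begin{bmatrix} \delta_p \\ \delta_l \end{bmatrix}
=
\begin{bmatrix} b_p \\ b_l \end{bmatrix}

% Eliminate the landmarks: reduced system in the poses only
\left( H_{pp} - H_{pl} H_{ll}^{-1} H_{lp} \right) \delta_p
  = b_p - H_{pl} H_{ll}^{-1} b_l

% Back-substitute to recover the landmark update
\delta_l = H_{ll}^{-1} \left( b_l - H_{lp} \delta_p \right)
```

Because $H_{ll}$ is typically block-diagonal (one small block per landmark), inverting it is cheap, which is where the computational saving usually comes from.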

End-to-End DETR-Based Video Panoptic Segmentation for Autonomous Navigation GitHub

Developed a production-scale deep learning system for real-time scene understanding in autonomous vehicles using transformer-based DETR architecture. Implemented end-to-end training pipeline for multi-camera panoramic video panoptic segmentation, achieving temporally consistent instance tracking across 5-camera arrays. Key technical contributions include: Custom DETR Architecture adaptation for video sequences with temporal consistency constraints, Multi-Camera Fusion for 360-degree environmental perception, Instance Tracking maintaining object identity across time and camera views, and Real-time Inference optimized for autonomous driving applications. System trained on Cityscapes dataset with custom data augmentation and loss functions for robust 'stuff' vs 'things' classification. Achieved state-of-the-art Panoptic Quality metrics while maintaining real-time performance. Features modular PyTorch implementation with comprehensive evaluation metrics and deployment-ready inference pipeline. Successfully demonstrates advanced computer vision expertise in transformer architectures, multi-modal sensor fusion, and production ML systems for safety-critical autonomous navigation.
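For readers unfamiliar with the Panoptic Quality metric mentioned above, here is a minimal sketch of its usual definition: the sum of IoUs over matched segments divided by TP + 0.5 FP + 0.5 FN. The function name, its arguments, and the assumption that candidate matches are already paired one-to-one are illustrative and not taken from the project code.

```python
def panoptic_quality(ious, num_pred, num_gt, match_threshold=0.5):
    """Compute Panoptic Quality for one class (illustrative helper).

    ious: IoU of each candidate (prediction, ground truth) pair, with each
          segment appearing in at most one pair (assumed pre-matched).
    num_pred / num_gt: total predicted and ground-truth segment counts.
    """
    # A pair counts as a true positive only if its IoU exceeds the threshold.
    matched = [iou for iou in ious if iou > match_threshold]
    tp = len(matched)
    fp = num_pred - tp  # predicted segments left unmatched
    fn = num_gt - tp    # ground-truth segments left unmatched
    denom = tp + 0.5 * fp + 0.5 * fn
    return sum(matched) / denom if denom > 0 else 0.0


if __name__ == "__main__":
    print(panoptic_quality([0.8, 0.6, 0.3], num_pred=3, num_gt=4))
```

The 0.5 threshold is the conventional choice because it guarantees that a segment can match at most one counterpart.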

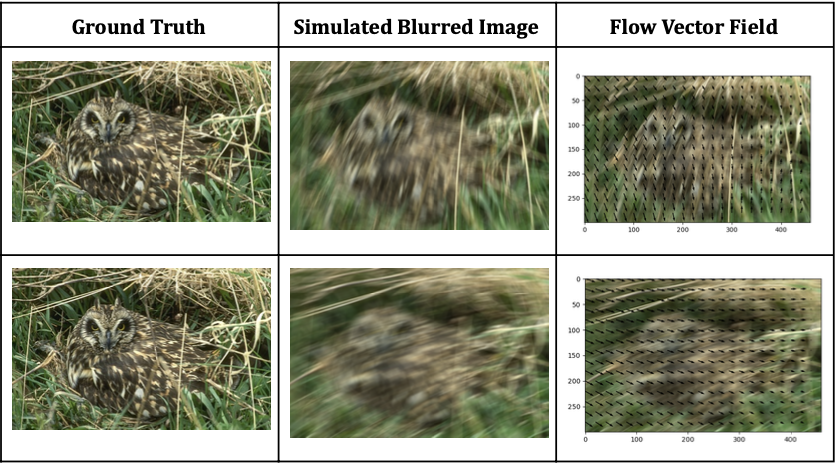

Removing Motion Blur GitHub

Deblurring images is challenging because the problem is ill-posed. Traditional methods rely on informative priors, whereas the paper this project follows proposes learning them from data. A fully convolutional neural network estimates motion flow maps, and training on synthetic data with simulated flows makes it possible to compute the blur kernels needed for non-blind deconvolution, which recovers the sharp image.
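To make the link between a motion flow vector and a blur kernel concrete, the sketch below builds a normalized linear blur kernel for a single flow vector. This is an assumption about how such kernels can be constructed, not the project's or the paper's code; the name `linear_blur_kernel` and the `samples_per_pixel` parameter are hypothetical.

```python
import math


def linear_blur_kernel(u, v, samples_per_pixel=4):
    """Rasterize a straight-line motion (u, v) in pixels into a blur kernel.

    The segment is centred on the kernel centre and the weights sum to 1.
    """
    length = math.hypot(u, v)
    radius = int(math.ceil(max(abs(u), abs(v)) / 2.0))
    size = 2 * radius + 1
    kernel = [[0.0] * size for _ in range(size)]
    # Sample points along the segment from -0.5 to +0.5 of the motion vector.
    n = max(1, int(math.ceil(length * samples_per_pixel)))
    for i in range(n + 1):
        t = i / n - 0.5
        x = int(round(radius + t * u))
        y = int(round(radius + t * v))
        kernel[y][x] += 1.0
    total = sum(map(sum, kernel))
    return [[w / total for w in row] for row in kernel]


if __name__ == "__main__":
    for row in linear_blur_kernel(4, 0):
        print(["%.2f" % w for w in row])
```

With a per-pixel flow map, one such kernel would be built per pixel (or per region) before running non-blind deconvolution.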

Camera-Guided Autonomous Search and Rescue with Modified ROS Exploration GitHub

Engineered an autonomous search and rescue system using TurtleBot3 that achieved an 86% victim detection rate (19 of 22 AprilTags) by rethinking the exploration algorithm. Addressed a critical limitation in standard ROS frontier exploration, where LiDAR-based exploration missed victims outside the camera field-of-view. Developed a Camera-Aware Exploration Strategy by modifying the gmapping and explore_lite packages to incorporate camera constraints into exploration planning. Key innovation: created a Binary Camera Mask Integration that overlays the camera field-of-view onto exploration maps, ensuring the robot prioritizes areas visible to its camera sensor. Technical implementation included Custom ROS Package Modification of core exploration algorithms, Multi-Sensor Fusion combining 2D LiDAR mapping with pinhole camera detection, and Real-time Path Planning optimized for victim discovery rather than pure exploration efficiency. The system outperforms standard frontier exploration in victim detection, which is crucial for time-critical rescue operations where comprehensive area coverage with visual confirmation is essential for mission success.
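The binary camera mask idea can be sketched on a plain occupancy grid as follows. This is one possible reading of the approach, not the modified gmapping/explore_lite code: the function names, the 60-degree field of view, and the range limit are assumptions, and occlusion by walls is ignored for brevity.

```python
import math


def camera_mask(width, height, pose, fov_deg=60.0, max_range=5.0):
    """Return a binary grid marking cells inside the camera's view cone.

    pose is (x, y, heading_radians) in grid coordinates (hypothetical layout).
    """
    x0, y0, heading = pose
    half_fov = math.radians(fov_deg) / 2.0
    mask = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = x - x0, y - y0
            dist = math.hypot(dx, dy)
            if dist == 0 or dist > max_range:
                continue
            # Bearing of the cell relative to the heading, wrapped to [-pi, pi].
            bearing = math.atan2(dy, dx) - heading
            bearing = math.atan2(math.sin(bearing), math.cos(bearing))
            if abs(bearing) <= half_fov:
                mask[y][x] = 1
    return mask


def unseen_by_camera(lidar_free, seen):
    """List (x, y) cells that LiDAR mapped as free but the camera never viewed."""
    return [(x, y)
            for y, row in enumerate(lidar_free)
            for x, free in enumerate(row)
            if free and not seen[y][x]]


if __name__ == "__main__":
    seen = camera_mask(5, 5, pose=(0, 2, 0.0), fov_deg=90.0, max_range=3.0)
    free = [[1] * 5 for _ in range(5)]
    print(unseen_by_camera(free, seen))
```

Cells returned by `unseen_by_camera` would remain exploration targets even though the LiDAR map already covers them, which is the behaviour the camera-aware strategy is after.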

Image Mosaicing and Warping GitHub

This project stitches multiple images together to form a panorama. The pipeline has three stages: feature detection with the Harris Corner Detector, feature matching with a brute-force matcher, and image warping with inverse distance weighted interpolation.
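Inverse distance weighted interpolation estimates a value at a query location as a weighted average of nearby samples, with weights proportional to 1 / distance^power. The sketch below is a generic version under assumed names (`idw_interpolate`, `power`); how the project applies it to warped pixel positions is not shown here.

```python
import math


def idw_interpolate(query, samples, power=2.0, eps=1e-12):
    """Interpolate a value at `query` from ((x, y), value) samples."""
    if not samples:
        raise ValueError("at least one sample is required")
    qx, qy = query
    num = 0.0
    den = 0.0
    for (sx, sy), value in samples:
        d = math.hypot(qx - sx, qy - sy)
        if d < eps:
            # Query coincides with a sample: return that sample exactly.
            return float(value)
        w = 1.0 / d ** power
        num += w * value
        den += w
    return num / den


if __name__ == "__main__":
    pixels = [((0.0, 0.0), 10.0), ((2.0, 0.0), 30.0)]
    print(idw_interpolate((1.0, 0.0), pixels))
```

In a warping context, the samples would be source pixels that landed at non-integer positions after the transform, and the query would be an integer pixel in the output panorama.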

Motion Detection GitHub

In computer vision it is often helpful to discard unnecessary parts of an image and focus only on the essential regions. When analyzing dynamic objects in a static environment, removing the static background and detecting the moving objects is an excellent first step. In this project, multiple spatial and temporal filtering techniques are implemented in C++ to detect motion in image sequences with static backgrounds. In particular, absolute difference and Prewitt temporal masking are implemented and compared to a derivative-of-Gaussian filter.
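The two simpler temporal filters can be sketched as below. This is a Python illustration rather than the project's C++ code, and it assumes the temporal mask is the three-tap derivative 0.5 * [-1, 0, 1] applied along the time axis; the function names and thresholds are hypothetical.

```python
def abs_diff_mask(prev, curr, threshold):
    """Mark pixels whose intensity changed by more than `threshold`."""
    return [[1 if abs(c - p) > threshold else 0 for p, c in zip(prow, crow)]
            for prow, crow in zip(prev, curr)]


def temporal_derivative_mask(frames, t, threshold, kernel=(-0.5, 0.0, 0.5)):
    """Apply a three-tap temporal kernel centred on frame t and threshold it."""
    if t < 1 or t + 1 >= len(frames):
        raise ValueError("frame t needs one neighbour on each side")
    window = frames[t - 1:t + 2]
    rows, cols = len(window[1]), len(window[1][0])
    mask = []
    for r in range(rows):
        row = []
        for c in range(cols):
            # Weighted sum of the same pixel across the three frames.
            response = sum(k * f[r][c] for k, f in zip(kernel, window))
            row.append(1 if abs(response) > threshold else 0)
        mask.append(row)
    return mask


if __name__ == "__main__":
    frames = [[[10, 10, 10]], [[10, 50, 10]], [[10, 90, 10]]]
    print(abs_diff_mask(frames[0], frames[1], threshold=20))
    print(temporal_derivative_mask(frames, t=1, threshold=20))
```

A derivative-of-Gaussian filter would replace the three-tap kernel with a longer, smoother one, trading temporal resolution for noise robustness.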

Mobile Robotics Algorithms GitHub

Implementations of selected algorithms from the Mobile Robotics course, including A* path planning, a particle filter, and Iterative Closest Point (ICP).
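As an example of the first algorithm in that list, here is a minimal A* sketch on a 4-connected grid with a Manhattan-distance heuristic. It is a generic textbook version, not the repository's code; the grid encoding (0 = free, 1 = obstacle) and the function name are assumptions.

```python
import heapq


def astar(grid, start, goal):
    """Return the shortest path from start to goal as (row, col) cells, or None."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance is admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]
    best_cost = {start: 0}
    came_from = {}
    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost[current]:
            continue  # stale heap entry, a cheaper route was found already
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = current
                    heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None


if __name__ == "__main__":
    grid = [[0, 0, 0],
            [1, 1, 0],
            [0, 0, 0]]
    print(astar(grid, (0, 0), (2, 0)))
```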